Scaling Lucene and Solr

Need to scale Solr? Here's how to configure it to manage large indexes and high query volume.

While many Lucene/Solr applications will never outgrow a single, well-configured machine, the fact is, more and more applications are pushing beyond the single machine limit due to either index size or query volume. In discussing Lucene and Solr best practices for performance and scaling, Mark Miller explains how to get the most out of a single machine, as well as how to harness multiple machines to handle large indexes, large query volume, or both.

Introduction

For the less acquainted, Lucene is a very compact and powerful search library while Solr is an enterprise search engine built on top of the Lucene library. Lucene gives you killer information retrieval core technology in a compact package, and Solr builds out features on top, including: a platform independent interface, faceting, replication, caching, large scale distributed search, and much more. This article assumes you are familiar with the Lucene/Solr basics, but should be fairly accessible to those that are investigating the scalability of the Lucene Stack.

Lucene and Solr are both highly scalable search solutions. Depending on a multitude of factors, a single machine can easily host a Lucene/Solr index of 5 – 80+ million documents, while a distributed solution can provide subsecond search response times across billions of documents. Over that range, query throughput can be adjusted with index replication at each individual server.

The standard procedure for scaling Lucene/Solr is as follows: first, maximize performance on a single machine. Next, absorb high query volume by replicating to multiple machines. If the index becomes too large for a single machine, split the index across multiple machines (or, shard the index). Finally, for high query volume and large index size, replicate each shard (a shard is a server in a distributed configuration).

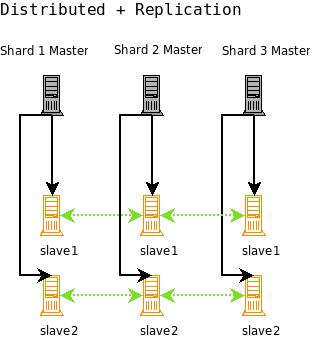

In the Scaling Progression diagram, you can get a better visual idea of how this progression works. It starts with a single machine serving all queries and handling all index updates. Next there is the master/slave configuration, where the master handles all updates and replicates all index changes to the slaves, and the slaves handle all queries. You can also split an index across multiple machines (called shards when using distributed Solr), where each shard handles index updates and queries. Finally, you can set up each shard for replication, where each shard master handles updates and all of the slaves for each shard handle queries.

These are the four best practice configurations for growing a Lucene/Solr application.

Maximizing a Single Server

The key to maximizing performance with Lucene/Solr is configuration. The Lucene/Solr developers aim to provide great out-of-the-box performance for the typical use case, but proper tuning for your specific environment can bring significant performance improvements. There are many variables in play for any Lucene/Solr installation, and there are many configuration and architectural considerations you should be thinking about that depend on those variables. What that means, practically, is that you have to test most things for your specific environment to figure out what works best. It’s not all bad news though – there are many generalizations to be made, and many tips to be learned as you are trying to figure out how to eke out maximum performance. I’m going to cover some of the ideas that play a role in search performance and you should take those ideas, learn more in depth about the ones that apply to your situation (start with the links at the end of this article), and then tweak and test to see if you are meeting your requirements. Lucene provides a very powerful benchmark module in the contrib section that you might find useful.

There are two major areas of performance: the search side and the indexing side. This article concentrates on things that affect search side performance, but also touches on indexing decisions that play a role in search performance.

Manage Your Index

It’s important to set up your index from the beginning with performance in mind. Be sure to choose the right settings at the start to avoid having to do a complete re-index of your content, which can be rather time consuming when you are dealing with large scale indexes. It’s also important to consider how you are going to maintain your index – Lucene/Solr give you a lot of control over index structure, and it’s up to you to use that power for best performance.

Term Frequencies

Depending on your data, many fields can benefit from using Fieldable.omitTf (Boolean). This indexes the field without term frequencies, positions, or payloads. This will often make sense on either very short fields, or non full text fields. Performance is improved by dropping non useful data structures. This optimization was only recently added to Lucene, and is not available in Solr yet, but is being worked on in SOLR-739 and should be available soon.

Norms

Use omitNorms wherever it makes sense. Norms allow for index time boosts and field length normalization. This allows you to add boosts to fields at index time and makes shorter documents score higher. Just as with omitTf, this may not be useful for short or non full text fields. Norms are stored in the index as a byte value per document per field. When norms are loaded up into an IndexReader, they are loaded into a byte[maxdoc] array for each field – so even if one document out of 400 million has a field, it is still going to load byte[maxdoc] for that field, potentially using a lot of RAM. Consider turning norms off for certain fields, especially if you have a large number of fields in the index. Any field that is very short (i.e. not really a full text field – ids, names, keywords, etc) is a great candidate. For a large index, you might have to make some hard decisions and turn off norms for key full text fields as well. As an example of how much RAM we are talking about, one field in a 10 million doc index will take up just under 10 MB of RAM. One hundred such fields will take nearly a gigabyte of RAM. You can omit norms with Lucene when adding a field to a document, and with Solr by using the correct field definition in your schema.xml file.
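The RAM figures above follow directly from the one-byte-per-document-per-field layout. The following is a minimal sketch of that arithmetic; the function name is made up for illustration and it counts only the raw array contents, not JVM object overhead.

```python
MIB = 1024 * 1024

def norms_ram_bytes(max_doc: int, fields_with_norms: int) -> int:
    """One byte per document for every field that keeps norms (byte[maxdoc] each)."""
    return max_doc * fields_with_norms

one_field = norms_ram_bytes(10_000_000, 1)
hundred_fields = norms_ram_bytes(10_000_000, 100)

print(f"1 field:    {one_field / MIB:.2f} MiB")       # just under 10 MB
print(f"100 fields: {hundred_fields / MIB:.2f} MiB")  # nearly a gigabyte
```

Note that the cost depends on maxdoc and the number of fields with norms, not on how many documents actually contain each field.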

Lazy Field Loading

When Lucene and Solr load a Document from the index (say for highlighting and hit display), all of the stored fields for that Document are loaded at once. If you are not always using all of the fields from the Document, and you have a lot of them, some of them large, you can get a speed boost by using a FieldSelector in Lucene, or using Lazy field loading in Solr (which uses a FieldSelector under the covers). This allows Lucene to skip unneeded fields as it loads the document. This isn’t always a savings if there is not much to skip, but in the right circumstances, this can lead to a dramatic improvement in stored field loading performance. Consider the case where you have stored a variety of small fields for hit list display, but also a few large fields holding all of the original content, say for document display. When loading the hit list fields, you can save a lot of time by skipping the large content fields.
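To see why skipping helps, consider a toy stored-field layout in which every value is preceded by its length. This is a simplified illustration only, not Lucene's actual file format, and the function names are hypothetical. A reader that knows which fields it wants can seek past the rest instead of reading them.

```python
import io
import struct

def write_document(fields: dict) -> bytes:
    """Serialize fields as: name length, name, value length, value (toy format)."""
    out = io.BytesIO()
    for name, value in fields.items():
        encoded = name.encode("utf-8")
        out.write(struct.pack(">H", len(encoded)))
        out.write(encoded)
        out.write(struct.pack(">I", len(value)))
        out.write(value)
    return out.getvalue()

def load_document(data: bytes, wanted: set):
    """Return the wanted fields and the number of value bytes actually read."""
    stream = io.BytesIO(data)
    loaded, bytes_read = {}, 0
    while True:
        header = stream.read(2)
        if not header:
            break
        (name_len,) = struct.unpack(">H", header)
        name = stream.read(name_len).decode("utf-8")
        (value_len,) = struct.unpack(">I", stream.read(4))
        if name in wanted:
            loaded[name] = stream.read(value_len)
            bytes_read += value_len
        else:
            stream.seek(value_len, io.SEEK_CUR)  # skip the value without reading it
    return loaded, bytes_read

doc = write_document({
    "title": b"Scaling Lucene",
    "url": b"/docs/1",
    "body": b"x" * 1_000_000,  # large original content, only needed for document display
})
hit_fields, value_bytes_read = load_document(doc, {"title", "url"})
```

Loading only the hit list fields touches a few dozen bytes of values here, while the megabyte of body content is skipped entirely.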

Stop Words

As you approach the upper limits of a single machine, extremely frequent terms (called stop words) can become very expensive in the wrong query. If part of a top level BooleanQuery, a SHOULD clause on a term that appears in every document will cause a match and score for every document in your index. While the performance for standard queries on an index that is pushing the limits of a machine might still be subsecond, you can find that queries that match most of the documents in the index can be very detrimental to performance. In my experience, the difference can be as dramatic as going from a subsecond response time to over 10 seconds on a very large index (over 10 million documents, not cache hits). If you choose to remove stop words at index time (not usually recommended), and you are forced to work near the limits of a single machine, be sure to consider your stop word list well. If you choose not to remove stop words (most still find them useful for phrase searching at least), consider providing an option to remove stop words at query time. It might be best to only remove them if they are a top level OR clause in a top level BooleanQuery. There is an Analyzer in Lucene’s contrib area that performs query time stop word removal: org.apache.lucene.analysis.query.QueryAutoStopWordAnalyzer. Be sure to test a little for your situation – Lucene will often surprise you when it comes to performance. If you want to build a really large scale installation with stop words, you can improve phrase search performance by looking into more efficient indexing schemes (such as indexing stop words as bi-grams / bi-words to create rarer terms). Two other options are to build a distributed setup where each machine can hold a smaller index, or to buy a larger server that can handle the volume.
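The idea of dropping stop words only when they are top level OR clauses can be sketched independently of Lucene. In this hypothetical model a top level boolean query is a list of (occur, term) pairs. The stop word list and function name are assumptions for illustration; this is not the QueryAutoStopWordAnalyzer API.

```python
STOP_WORDS = {"the", "a", "an", "of", "and", "to", "in"}  # hypothetical list

def strip_top_level_stop_words(clauses):
    """Drop SHOULD clauses on stop words from a top level boolean query.

    clauses: list of (occur, term) pairs, where occur is 'SHOULD', 'MUST',
    or 'MUST_NOT'. Required and prohibited clauses are left untouched, and
    the query is returned unchanged if stripping would leave it empty.
    """
    kept = [
        (occur, term)
        for occur, term in clauses
        if not (occur == "SHOULD" and term.lower() in STOP_WORDS)
    ]
    return kept if kept else list(clauses)

query = [("SHOULD", "the"), ("SHOULD", "lucene"), ("MUST", "the")]
print(strip_top_level_stop_words(query))
```

Phrase clauses would be a separate clause type in a real query tree and would pass through unchanged, which keeps stop words available for phrase searching.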

Index Optimization

A Lucene index is made up of 1-n segments. A single segment can almost be treated as a single Lucene index itself, but not quite. A single segment is just short of a self contained inverted index. The segmented nature of a Lucene/Solr index allows for efficient updates, because rather than changing existing segments, you can just add new ones. Then, over time, these segments can be merged together for more efficient access. Obviously, the more segments you have to search across, the slower the search. If you are not using the compound file format, fewer segments also means many fewer open files. This helps keep your operating system from throwing ‘Too Many Open Files’ exceptions when your index gets large. If you do get those exceptions, you might need to raise the open file limit on your OS, or keep the number of segments down using the techniques below. Of course, for a small performance penalty, you can also use the compound file format (Lucene and Solr default to this), which writes out segments in a single file, significantly reducing the number of files in your index.

When you optimize a Lucene index, each individual index segment will be merged into one large segment. This makes searching more efficient – not only are fewer files touched, but with a single segment, Lucene can skip many small steps that are necessary to treat multiple segments as a single index. If you are using a FieldCache (say for sorting), these small steps severely impact IndexReader FieldCache loading. This is likely to be fixed in upcoming releases, but until then, it means an optimized index is currently very beneficial for FieldCache loading. See the discussion in LUCENE-1483.

Optimizing in both Lucene and Solr is an I/O intensive operation, and on a large index, it can actually take some time to complete. You might also consider issuing a partial optimize. With a partial optimize, you can tell Lucene/Solr how many resulting segments you want. This allows you to improve search speed, perhaps a step at a time, without committing to a full optimization down to a single segment.

Another strategy for maintaining a low segment count is to use a low merge factor on your IndexWriter when adding to the index. The merge factor controls how many segments your index needs to span. Using a value of lower than 10 can help keep searches nice and fast. The tradeoff is that additions to the index will now take a bit longer as more merging has to take place more often to keep the segment count low. For example, with a mergefactor of 2 (the lowest allowed value), you would never have more than two segments.

Manage Your Memory

A large index can require a lot of RAM. You should familiarize yourself with some of the structures in Lucene/Solr that can take significant resources in order to best manage your Lucene/Solr environment. If you do not properly understand how much RAM is needed and how it should be allocated, you are likely to run into numerous performance problems.

Lucene Caches

In a large scale search application, caches can become very important. It is a common theme in performance to try and avoid disk IO. Lucene provides limited out-of-the-box support for caching and you may want to build out a caching layer yourself. That’s exactly what Solr has chosen to do.

FieldCache

Lucene uses FieldCache to efficiently access all of the values for a field in memory rather than going to disk. This is necessary for sorting and can be used for Solr’s faceting, among other things. For a large index, the FieldCache can require a fair amount of RAM, especially if you load one for many fields (if you sort on many fields for example). Understanding out of memory errors related to FieldCaches has been a common issue for many Lucene/Solr users.

A FieldCache caches the value (and possibly ordinal) for every document in the index in memory. This allows for fast comparisons on a value for a given Document field. An ordinal simply indicates order, and might be used for something like Strings. Instead of Tom, Dick, Clark, you might use 3, 2, 1 – sorting will be faster, while maintaining the right order. For other types (integer, long, etc), the value itself can be a good ordinal as well.

Most of a FieldCache is simply an array, the size related to how many documents are in the index (including deleted docs that have not been merged out). So if you are sorting on a long field for an index with 10 million documents, that will load 10 million longs into a long[] array: That is approximately 76.29 megabytes. Multiply that by the number of long fields that a FieldCache is built on to get your total long FieldCache memory usage. Repeat for your other field types to get an idea of total usage. Another example: An int[] array on a 100 million document index will consume over 380 megabytes.
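The estimates above can be reproduced with a few lines of arithmetic. This sketch assumes the standard Java primitive sizes and ignores array headers and the extra structures used for String fields; the function name is illustrative.

```python
MIB = 1024 * 1024
BYTES_PER_VALUE = {"byte": 1, "short": 2, "int": 4, "float": 4, "long": 8, "double": 8}

def field_cache_mib(max_doc: int, field_types: list) -> float:
    """Approximate RAM for one primitive array of length max_doc per cached field."""
    return sum(max_doc * BYTES_PER_VALUE[t] for t in field_types) / MIB

print(f"{field_cache_mib(10_000_000, ['long']):.2f} MiB")   # one long field, 10 million docs
print(f"{field_cache_mib(100_000_000, ['int']):.2f} MiB")   # one int field, 100 million docs
print(f"{field_cache_mib(10_000_000, ['long', 'long', 'int']):.2f} MiB")  # several sort fields
```

Remember that max_doc includes deleted documents that have not yet been merged out.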

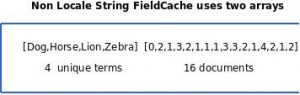

The String type is a bit more complicated than the others. If you have a non locale String-based FieldCache (that is, you are sorting on a String field, but you are not supplying a Locale for String comparisons), an array of all of the unique terms in the index (String[]) will be loaded and then a second array of integers will be loaded for each document in the index. The second array is full of ordinals that index into the unique terms array. This is less efficient access for the values (two array dereferences), but in the single IndexReader case, it allows you to sort using integers rather than Strings, as you can compare using the ordinal array of integers. If you supply a locale for the String FieldCache, a String[] array is filled with the term from each document for that field in the index, just like the other primitive types. Ordinal compares will not work when you are using a locale. The String[] representation will save an index into an array on lookup, but it’s still slower because you have to compare Strings rather than integer ordinals when sorting.

The diagram above shows the main RAM eating data structures for a String FieldCache that does not compare using a Locale. The first array contains all of the unique terms in the index for the FieldCache field, and the second array is an index into the first for each of the documents in the index. As you can see, you can sort the documents just using comparisons of the integers in the second array. You can’t do this with a MultiSearcher however – ordinals from different indexes cannot be usefully compared.
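The two-array structure can be mimicked in a few lines. This is a sketch of the idea only, with illustrative names, and it uses zero-based ordinals rather than the one-based numbers in the Tom, Dick, Clark example.

```python
def build_string_index(values):
    """Return (lookup, order): the sorted unique terms, and one ordinal per document."""
    lookup = sorted(set(values))
    ordinal_of = {term: i for i, term in enumerate(lookup)}
    order = [ordinal_of[value] for value in values]
    return lookup, order

names = ["Tom", "Dick", "Clark", "Dick"]   # field value for doc ids 0..3
lookup, order = build_string_index(names)

# Sorting compares small integers only; Strings are never compared.
sorted_docs = sorted(range(len(names)), key=lambda doc: order[doc])

# Reading a value back costs two array dereferences.
first_value = lookup[order[sorted_docs[0]]]
```

Ordinals built this way are only meaningful within one index: the same integer would refer to a different term in another index, which is why they cannot be compared across a MultiSearcher.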

Filters

Lucene comes with a CachingWrapperFilter that will cache Lucene Filters, with the Filter tied to the life of an IndexReader. The first time the Filter is used, it will be somewhat slow as it calculates which documents match the filter, and then caches the results in a WeakHashMap. Subsequent requests will skip those steps though, and perform quite fast, working directly with the cached Filter. If you combine the CachingWrapperFilter with a QueryWrapperFilter, you can pretty efficiently and easily screen out any set of documents you’d like.
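The caching pattern described here, a result computed once per reader and held in a weak map so it disappears with the reader, can be sketched as follows. The classes are stand-ins written for this example and are not Lucene's API; Python's WeakKeyDictionary plays the role of Java's WeakHashMap.

```python
import weakref

class IndexReader:
    """Stand-in for a reader: just holds per-document field values."""
    def __init__(self, docs):
        self.docs = docs

class CachingFilter:
    """Computes the matching doc ids once per reader, then serves them from a weak map."""
    def __init__(self, predicate):
        self.predicate = predicate
        self._cache = weakref.WeakKeyDictionary()  # entries vanish when the reader is collected
        self.computations = 0

    def doc_ids(self, reader):
        cached = self._cache.get(reader)
        if cached is None:
            self.computations += 1  # the slow first pass over the index
            cached = frozenset(
                doc_id for doc_id, doc in enumerate(reader.docs) if self.predicate(doc)
            )
            self._cache[reader] = cached
        return cached

reader = IndexReader([{"lang": "en"}, {"lang": "de"}, {"lang": "en"}])
english_only = CachingFilter(lambda doc: doc["lang"] == "en")

first = english_only.doc_ids(reader)    # computed
second = english_only.doc_ids(reader)   # served from the cache
```

Because the cache is keyed on the reader, opening a new reader after an index change means the first use of the filter pays the computation cost again.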

Documents

It is also a good idea to cache Lucene Documents. The Document class in Lucene provides access to stored fields, and when Lucene has to go to disk to read these, it really can be quite inefficient. Providing stored fields for hit list displays on a highly trafficked site can quite quickly get bogged down going to disk. The Hits class in Lucene used to provide limited Document caching, but that class has been deprecated in favor of the TopDocs APIs, so it’s pretty much a roll your own Document cache affair.

As usual, it helps to look at Solr for best practices when it comes to a Lucene application.

Solr Caches

Besides custom user caches, Solr has three types of built-in caches. If you need caching (and you usually do), setting up your caches properly can be very important for performance. If you are in a situation where caching is not very beneficial, say you pretty much never issue the same query twice, turning caching off can also help performance.

Each cache should be carefully considered:

- FilterCache – unordered document ids. This is for caching filter queries. This cache stores enough information to filter out the right documents across the whole index for a given query. Using set intersections on these filtered ids allows for efficiency in combining filter queries. This won’t cache the order of returned documents, so it’s no good for caching a query that relies on relevance or sort fields. If you are faceting with the enum method described under Solr Faceting, which issues one filter per distinct value, this should be set to at least the number of unique values in all the fields you are faceting on with that method.

- QueryCache – ordered document ids. This is for caching the results of normal queries. This can require much less RAM than the FilterCache because it only caches the returned documents, while the FilterCache must cache the results for the whole index. The optimal size of this cache depends on a lot of factors. Essentially, you want to make sure that it is large enough so that the majority of the results of your really common queries are cached.

- DocumentCache – stores stored fields. Solr caches Documents in memory so that no request has to hit the disk for stored fields. This can be very valuable as stored fields are most often used for hit list displays. The Solr Wiki recommends that you set the size of this cache to at least <max_results> * <max_concurrent_queries>, to ensure that Solr does not need to re-fetch a document during a request.
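The difference between unordered filter entries and ordered query results can be shown with plain sets and lists. This is a conceptual sketch with made-up data and names, not Solr's implementation: cached filters are sets that combine by intersection, and the ranked hit list supplies the order.

```python
# Hypothetical cached filters: filter query string -> unordered set of matching doc ids.
filter_cache = {
    "type:book": {1, 4, 5, 8, 9},
    "lang:en": {2, 4, 8, 9, 11},
}

def apply_filters(ranked_hits, filter_queries):
    """Keep the ranked order of the main query, restricted to docs passing every filter."""
    if not filter_queries:
        return list(ranked_hits)
    allowed = set.intersection(*(filter_cache[fq] for fq in filter_queries))
    return [doc for doc in ranked_hits if doc in allowed]

ranked_hits = [9, 3, 4, 11, 1]  # doc ids in relevance order from the main query
filtered = apply_filters(ranked_hits, ["type:book", "lang:en"])
```

Each filter is cached independently, so any combination of already-seen filter queries can be answered without recomputing them.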

One of the cache settings to be mindful of is the autowarm value. The autowarm setting tells Solr how many entries to take from the old cache and put into the new one when a new view of the index is opened (due to an index change). The document cache cannot be autowarmed. For the other caches, the new view will not be available to users until the warming is done, so you want to balance the autowarm count: high enough that a fair portion of the cache is carried into the new Searcher, but not so high that it takes too long to warm a new Searcher for use. Be sure to test that you are warming in an acceptable time frame.

It is also a good idea to use the Solr admin webpage to look at your cache statistics. If you have a very low hit rate, your cache may be doing more harm than good. If you have a very high eviction rate, your cache is likely too small, and also may be doing more harm than good. If you have enough evictions, it is entirely possible that cached results are being tossed out before they are used, or after they are only used a handful of times. Check out the Solr Wiki on SolrCaching and be sure to use the appropriate settings for best performance.
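To make the hit rate and eviction symptoms concrete, here is a small LRU cache that tracks the same kind of counters. It is an illustrative sketch, not Solr's LRUCache, and the workload is invented: three queries repeated in a cycle.

```python
from collections import OrderedDict

class LRUCache:
    """Least-recently-used cache that records lookups, hits, and evictions."""
    def __init__(self, max_size):
        self.max_size = max_size
        self._entries = OrderedDict()
        self.lookups = self.hits = self.evictions = 0

    def get(self, key):
        self.lookups += 1
        if key in self._entries:
            self.hits += 1
            self._entries.move_to_end(key)  # mark as most recently used
            return self._entries[key]
        return None

    def put(self, key, value):
        if key in self._entries:
            self._entries.move_to_end(key)
        self._entries[key] = value
        if len(self._entries) > self.max_size:
            self._entries.popitem(last=False)  # drop the least recently used entry
            self.evictions += 1

    def hit_ratio(self):
        return self.hits / self.lookups if self.lookups else 0.0

def run(cache, queries):
    """Look each query up, computing and caching a placeholder result on a miss."""
    for q in queries:
        if cache.get(q) is None:
            cache.put(q, f"results for {q}")
    return cache

workload = ["a", "b", "c", "a", "b", "c"]
too_small = run(LRUCache(max_size=2), workload)
big_enough = run(LRUCache(max_size=3), workload)
print(too_small.hit_ratio(), too_small.evictions)
print(big_enough.hit_ratio(), big_enough.evictions)
```

With room for only two entries, every result is evicted just before it would have been reused, so the cache adds work and never produces a hit. One more slot removes the evictions entirely.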

This is not the first time we have needed to know things like how many unique values we might have in a field. A very useful tool for finding some of this information is the LukeRequestHandler that Solr provides. Simply hitting solr/admin/luke or solr/admin/luke?wt=xslt&tr=luke.xsl will display a variety of great statistics about your data. Don’t be afraid to slurp it in, look at things with the LukeRequestHandler, tweak what you have done, and then start all over. For large indexes, you might sacrifice some information by adding numTerms=0, solr/admin/luke?numTerms=0. This can turn a call that takes many minutes on a large index into seconds, for the price of less detailed term data.

Solr Faceting

Solr has an excellent and efficient faceting implementation, but it really pays to consider its effects on memory. Solr offers two main modes for faceting: FacetQueries and FacetFields.

- FacetQueries are handled by caching the results of a query as a filter. This FacetQuery set of documents is intersected against result sets to count how many documents a query condition is true for (the facet counts). If there are few enough results in the filter, the filter is maintained as a hashed set of document ids. If the number of results exceeds the ‘hashDocSet’ setting, a bit set is used instead.

- FacetFields allow for facet counts based on distinct values in a field. There are two methods for FacetFields, one that performs well with few distinct values in a field, and the other for when a field contains many distinct values (generally, thousands and up – you should test what works best for you). The first method, facet.method=enum, works by issuing a FacetQuery for every unique value in the field. As mentioned, this is an excellent method when the number of distinct values in a field is small. It requires excessive memory though, and breaks down when the number of distinct values gets large. When using this method, be careful to ensure that your FilterCache is large enough to contain at least one filter for every distinct value you plan on faceting on. The second method uses the Lucene FieldCache (a future version of Solr will actually use a different non-inverted structure – the UnInvertedField). This method is actually slower and more memory intensive for fields with a low number of unique values, but if you have a lot of uniques, this is the way to go. This method uses the FieldCache to look up the values for the given field for each document, and every time a document with a given value is found, the value has its count incremented.
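The two FacetFields strategies can be contrasted on a toy index. This sketch uses invented data and function names and models only the counting logic: the enum style intersects one filter per distinct value with the result set, while the FieldCache style walks the result documents and increments a counter per value.

```python
from collections import Counter

# Doc id -> field value for a single-valued field (what a FieldCache array would hold).
field_values = ["red", "blue", "red", "green", "blue", "red"]
result_docs = {0, 1, 2, 5}  # documents matching the user's query

def facet_enum(result_docs, field_values):
    """One filter (doc id set) per distinct value, each intersected with the results."""
    filters = {}
    for doc, value in enumerate(field_values):
        filters.setdefault(value, set()).add(doc)
    return {value: len(docs & result_docs) for value, docs in filters.items()}

def facet_field_cache(result_docs, field_values):
    """Look up each result document's value and increment that value's count."""
    return dict(Counter(field_values[doc] for doc in result_docs))

enum_counts = facet_enum(result_docs, field_values)
fc_counts = facet_field_cache(result_docs, field_values)
```

The enum approach does work proportional to the number of distinct values and needs one cached filter for each, while the FieldCache approach does work proportional to the number of matching documents.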

Queries

You should try to keep in mind which types of queries are generally slower and consider their use carefully. Keep in mind, these are just generalities, but they are important to consider when designing your setup. So in general: The family of multi-term queries is obviously slower than term queries. FuzzyQuery in particular can be very slow because of the edit distance that it calculates for scoring and matching. It’s obvious, but also helpful to consider that the fastest queries will be those that match the fewest documents and are as close to being a simple term query as possible. A BooleanQuery adds a bit of its own overhead, and also combines the cost of its Query clauses. SpanQueries are more expensive than standard queries because they take positions into account, both for matching and scoring. The same is true of phrase queries, but they do tend to be faster than Span queries. Finally, AND queries tend to be quite a bit faster than OR queries because skip-lists can be employed. Consider which types of queries you will allow from your users and which you are most likely to see. If Lucene/Solr’s default implementations do not adequately perform for your needs (let’s say you have to handle mainly complex wildcard queries), there are other options. For example, you can create a separate permuterm index for more efficient wildcard support.
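The advantage of AND queries comes from being able to jump ahead in a posting list instead of visiting every entry. The sketch below illustrates the principle on sorted doc id lists, using binary search as a stand-in for skip lists; it is not how Lucene's scorers are implemented.

```python
from bisect import bisect_left

def intersect(postings_a, postings_b):
    """Leapfrog intersection of two sorted doc id lists.

    Whichever list is behind jumps directly to the first doc id that is
    >= the other list's current doc, skipping everything in between.
    """
    result = []
    i = j = 0
    while i < len(postings_a) and j < len(postings_b):
        a, b = postings_a[i], postings_b[j]
        if a == b:
            result.append(a)
            i += 1
            j += 1
        elif a < b:
            i = bisect_left(postings_a, b, i + 1)
        else:
            j = bisect_left(postings_b, a, j + 1)
    return result

rare_term = [3, 900_000]
common_term = list(range(1_000_000))  # a term that appears in every document
both = intersect(rare_term, common_term)
```

Here the conjunction touches only a handful of positions in the million-entry list. An OR of the same two terms would have to match and score all one million documents, which is the stop word problem described earlier.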

ConstantScore queries are queries that just return a constant score for each document rather than a score derived from a relevance formula. On large indexes, they can also be dramatically faster than their non-constant score equivalents, with the tradeoff that they will not contribute to relevance (if you think they sound a lot like a filter, you are right – in fact they use a filter underneath the covers). Lucene 2.4 provides a ConstantScoreRangeQuery as well as a ConstantScoreQuery that takes a filter as an argument. If you use a QueryFilter, you can effectively turn any query into a ConstantScoreQuery. Solr actually provides even more ConstantScore queries, including ConstantScorePrefixQuery and ConstantScoreWildcardQuery. Solr 1.3 and on now uses the whole ConstantScore family by default for the built in query parsers.

On a large index, ConstantScore queries can be a good substitute for the family of multi-term queries: WildcardQuery, FuzzyQuery, RangeQuery, etc. Standard multi-term queries work by enumerating all matching terms in the index and then creating a BooleanQuery with each of those terms as a clause. If a lot of unique term matches are enumerated, the query can be rather slow. With a ConstantScoreQuery, rather than scoring each term in a multi-term query (which may not be very helpful), all matches are given a constant score, and no BooleanQuery is created. This avoids the maxclause exception errors that are common with the queries that expand to BooleanQueries, and it can be significantly faster on large indexes. In the next version of Lucene, all of the multi-term queries (wildcard, fuzzy, range, prefix) will provide an option to use constant scoring rather than BooleanQuery expansion.
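The contrast between the two strategies can be sketched with a toy term index mapping each term to its posting list. The function names are hypothetical, and the limit of 1024 mirrors Lucene's default BooleanQuery clause limit; everything else is simplified for illustration.

```python
MAX_CLAUSE_COUNT = 1024  # mirrors Lucene's default BooleanQuery clause limit

class TooManyClauses(Exception):
    pass

def expand_prefix(term_index, prefix):
    """Expansion style: every matching term becomes its own scored clause."""
    terms = [term for term in term_index if term.startswith(prefix)]
    if len(terms) > MAX_CLAUSE_COUNT:
        raise TooManyClauses(f"{len(terms)} terms match '{prefix}*'")
    return terms

def constant_score_prefix(term_index, prefix, score=1.0):
    """Constant score style: union the postings of matching terms, one score for all."""
    matches = set()
    for term, postings in term_index.items():
        if term.startswith(prefix):
            matches.update(postings)
    return {doc: score for doc in matches}

# Toy index: 2000 distinct terms sharing a prefix, one document each.
term_index = {f"lucene{i}": [i] for i in range(2000)}

try:
    expand_prefix(term_index, "lucene")
    expansion_failed = False
except TooManyClauses:
    expansion_failed = True

scored = constant_score_prefix(term_index, "lucene")
```

The constant score version never builds per-term clauses, so the number of matching terms affects only how many posting lists are merged, not whether the query can run.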

The next release of Lucene will introduce a new range query called TrieRangeQuery. TrieRangeQuery allows for extremely efficient large scale numeric range queries, and you should keep an eye out for it. It’s a large step forward in Lucene’s support for numeric range queries. Solr 1.4 is likely to include support for TrieRangeQuery as well.

Maximizing Throughput

When you start using Lucene and Solr on a server with many cores or processors, you might start running into certain known bottlenecks. I’m going to go over some of the more common issues that you should consider when trying to get the most out of Lucene/Solr using higher end hardware.

When designing a system with Lucene, you generally want to share a single IndexSearcher/IndexReader across multiple threads. IndexReader and IndexSearcher can essentially be used interchangeably because an IndexSearcher is basically a thin wrapper around an IndexReader. Due to a Sun JRE bug, the picture is more complicated on Windows, and you might get better performance on a multi core/processor system by using multiple IndexSearcher/IndexReader instances. However, it can be much more resource intensive to do this, especially if you are sorting on fields or doing something else that uses a FieldCache or other cached resources keyed to an IndexReader. For a large index, you want as few IndexReaders alive at a time as you can manage, so that more resources are available to use. The exception to this advice is when you want to warm up a new IndexSearcher before it is put into use so that the first search a user sees on a new Searcher is as fast as any other given search. In this case, the old Searcher should still service requests until the new one is ready to be put into service. Solr takes care of this type of management effectively behind the scenes.

In your quest for as few IndexReaders as possible, you are likely to run into a couple of known bottlenecks on a multi-core/processor machine. If you are careful to avoid these bottlenecks, you will see dramatic throughput increases on your server.

One bottleneck to avoid, which will maximize multi-core performance in Lucene, is to make sure that you open your IndexReaders in read-only mode. This removes a synchronization bottleneck mostly involving deletion checks, and ensures that you will get better concurrent throughput with multiple cores/threads. If you are used to dealing with IndexSearcher, this means creating an IndexReader instead and then creating an IndexSearcher with it. Solr 1.3+ uses read-only IndexReaders internally to ensure you get maximum performance out of the box.

Yet another synchronization bottleneck in Lucene/Solr can be avoided by using a non-Windows operating system. With Lucene, if you are on a non-Windows OS, you can use an NIOFSDirectory rather than an FSDirectory for a multi-threaded performance boost. As mentioned above, a bug in Sun’s Windows JVM keeps this optimization out of reach for Windows users, but this may be rectified in a future JRE update. Solr 1.3 does not yet take advantage of this feature, but Solr 1.4+ will auto detect your OS and use the right implementation for maximum performance.

Finally, when you get your hands on Solr 1.4, you might try using the alternate FastLRUCache cache implementation rather than solr.LRUCache. The standard LRUCache uses synchronized ‘gets’ on the underlying Map which can cause a synchronization bottleneck with enough cores/processors/threads. The FastLRUCache provides unsynchronized ‘gets’ on the underlying Map, for the cost of an occasional cleanup operation. FastLRUCache is supposed to be better for high hit ratio caches (puts are more expensive, while gets are cheaper), so you should still consider using solr.LRUCache for a low hit ratio cache.

JVM Settings

Properly configuring your JVM can be a complicated topic and is best left to articles which focus on that task. Further, modern JVMs can be quite good at choosing default settings based on the detected hardware. The following sections, however, contain a few quick tips.

Choosing Xms Xmx

One strategy is to set a very low min memory and a high max memory. Run your Lucene/Solr application and monitor the JVM’s memory usage. Now set the minimum setting to what you see is the general usage – set the maximum to whatever you can afford to give, while leaving plenty of RAM for the OS, other applications, and most importantly, the file system cache. How much RAM you should leave is going to depend on a host of factors, including your OS, what other programs are running, how large your index is, etc. The operating system will use your excess RAM for caching access to the file system and a large index needs plenty of RAM available to this cache for optimal performance. All in all, for a large scale index, it’s best to be sure you have at least a few gigabytes of RAM beyond what you are giving to the JVM.

Use the server HotSpot VM

Ensure you are using the -server HotSpot VM. This is the best option for a long running server application that wants to maximize throughput. To check whether you are using the client or server HotSpot VM, type java -version on the command line and look for ‘client’ or ‘server’. If you have more than one JRE installed on your system, be sure to check the right one. Often, the JRE distribution does not come with the server HotSpot VM, but the JDK distribution generally does.

Checking Settings

A great way to see what JVM settings your server is using, along with other useful information, is to use the admin RequestHandler, solr/admin/system. This request handler will pump out a plethora of server statistics and settings.

The rest of your server

Ensure that your Solr and Lucene indexes are excluded from any indexing applications (Windows indexing service, desktop search apps, etc). It’s not likely that an indexing application would pick up Solr/Lucene index files as something that it understands and try to parse them, but it’s best to just exclude them. You want to be sure that external applications are not inspecting your index files as they change, especially when you are building a large index. Also be sure to exclude your indexes from any backup applications. Backups will likely be inconsistent unless done in cooperation with Solr/Lucene, and they can adversely affect performance. Lucene In Action 2 has released a free chapter on performing hot backups with Lucene. You can create a backup of a Solr index by simply setting up Solr replication.

Consider the other programs that need to run on the server and be sure they have enough RAM beyond what has been allocated to the JVM. Also be sure the OS has enough RAM to function, and that there is plenty of available RAM for the OS’s filesystem cache. There should be enough RAM available to cache key Lucene index files in memory. For a very large index, having at least a few gigabytes available would be best. Exactly how much you want depends on a lot of factors, so take a look at the physical size of your index and figure that you want as much of that cached in RAM as you can reasonably get.
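As a rough way to reason about this budget, the arithmetic can be written down. This is only a back-of-the-envelope sketch: the function name and the example figures are hypothetical, and real filesystem cache behavior depends on your OS and access patterns.

```python
def cache_budget(total_ram_gb, jvm_max_heap_gb, other_apps_gb, index_size_gb):
    """Estimate RAM left for the OS filesystem cache and how much of the index it covers.

    Returns (free_for_cache_gb, fraction_of_index_cacheable).
    """
    free_for_cache = max(total_ram_gb - jvm_max_heap_gb - other_apps_gb, 0.0)
    if index_size_gb <= 0:
        coverage = 1.0
    else:
        coverage = min(free_for_cache / index_size_gb, 1.0)
    return free_for_cache, coverage
```

For example, a 16 GB machine with a 6 GB maximum heap and 2 GB reserved for everything else leaves 8 GB for the cache, which covers at most 40% of a 20 GB index.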

High Query Volume

Many applications hit a point where a single machine can still easily handle a given index size, but can’t keep up with a given query load. The proper way to handle this situation is to replicate the index to other servers, and then load balance requests across the servers, all of which contain a ‘copy’ of the index. Copies then can be updated over time as the ‘master’ version of the index changes.

Replicating with Lucene

While merges do take place, every time you add a new batch of docs to Lucene/Solr, a new index segment is created. When copying (replicating) the index to another server, you often only need to copy the new smaller segment.

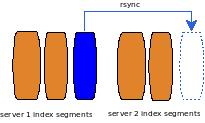

Lucene replication is mostly a do-it-yourself affair. The ‘best practice’ technique is to take advantage of Lucene’s index file semantics. Lucene indexes are made up of 1-n individual segments. A write once scheme is used, so that each segment’s files do not change on index updates. Instead, new files are created, and then the index is atomically told to point at the old files that have not changed and any new files that were created. This setup works well with index replication because it’s quite easy to use something like rsync to efficiently replicate index changes: you can just copy the new files. For example, upon adding a few documents to an index that already has millions of documents, a new segment containing the few new documents will be written, and often, only this segment will need to be replicated to the other machines. While segment merging will affect which segments need to be copied, many times there will be large unchanged segments, allowing for efficient copying of small index deltas.

So a classic configuration would be to have one master that handles adding and updating documents, and then n slave servers that you replicate the master index to (actually just the changed files in the index).
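To make the "just the changed files" idea concrete, here is a minimal sketch of the comparison a replication step performs. In practice a tool like rsync does this work for you; the function names and the example file listings are hypothetical, and comparing by name and size only is a simplification.

```python
def files_to_replicate(master_files, slave_files):
    """Return names of master index files the slave is missing or holds at a different size.

    Both arguments map file name -> size in bytes.
    """
    return sorted(
        name
        for name, size in master_files.items()
        if slave_files.get(name) != size
    )


def files_to_delete(master_files, slave_files):
    """Return names of slave files that no longer exist on the master."""
    return sorted(set(slave_files) - set(master_files))


# Hypothetical listings: one large unchanged segment, one small new segment.
master = {"_0.cfs": 1000000000, "_1.cfs": 20000, "segments_3": 100}
slave = {"_0.cfs": 1000000000, "segments_2": 100}
changed = files_to_replicate(master, slave)
stale = files_to_delete(master, slave)
```

Here only the small new segment and the new segments file need to be transferred; the large unchanged segment stays where it is.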

When the time and bandwidth needed for replication is less of a concern, and high query throughput is more important, it can be wise to abandon the advantage of transferring changed segments and only replicate fully optimized indexes. It costs a bit more in terms of resources, but the master will eat the cost of optimizing (so that users don’t see the standard machine slowdown effect that performing an optimize brings), and the slaves will always get a fully optimized index to issue queries against, allowing for maximum query performance. Generally, bandwidth for replication is not much of a concern now, but keep in mind that optimizing on a large index can be quite time consuming, so this strategy is not for every situation.

This diagram shows a single master with two slaves. The master will receive all updates to the index, and will replicate these updates to the slaves at key points. Often, the best times are after an optimization or a batch of index updates.

Replicating with Solr

The best example to look at for Lucene index replication is actually Solr, which has both a unix/rsync/script solution that relies on hard links to take efficient snapshots of the index, as well as a new all Java solution that takes advantage of Lucene’s pluggable IndexDeletionPolicy to maintain snapshots of the index. The script replication is pretty hardened and has worked well for some time now. The new all Java replication will first be available in Solr 1.4, and while still in its early hardening phase (it’s still new after all), it’s certainly a feature many users are anticipating.

In the Solr model, there is a Master server which handles all updates, and 1-n slave servers that handle all queries. The Master occasionally takes ‘snapshots’ of the index, literally freezing a view of the index in time. The slaves then poll the Master, asking if there is a new snapshot to download. If there is, any changed files will be transferred from the Master to the Slave and Solr will open a new view on the updated index (with cache autowarming and everything else that normally goes on with a single machine index view update). You want to be sure to carefully configure your setup so that replication will have ample time to complete before a new replication is triggered. In practice, depending on your hardware and index, you don’t want to trigger replication more often than once a minute. For a very large index or low bandwidth environment, the time needed to replicate could be longer.
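To get a feel for whether replication has ample time to complete, a back-of-the-envelope check can help. The sketch below is only an estimate under stated assumptions: the function names, the safety factor, and the example numbers are all hypothetical, and it ignores cache autowarming and competing network traffic.

```python
def transfer_seconds(changed_bytes, bandwidth_bytes_per_sec):
    """Estimate how long it takes to copy the changed index files."""
    return changed_bytes / bandwidth_bytes_per_sec


def interval_is_safe(changed_bytes, bandwidth_bytes_per_sec, interval_sec, safety_factor=2.0):
    """Check that the estimated transfer, padded by a safety factor, fits in the interval."""
    estimate = transfer_seconds(changed_bytes, bandwidth_bytes_per_sec)
    return estimate * safety_factor <= interval_sec
```

For instance, 500 MB of changed segments over a 50 MB/s link takes about 10 seconds and fits comfortably in a 60 second interval, while a freshly optimized 20 GB index over the same link does not.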

Using this model, Solr can scale horizontally with ease. Just add more slaves as necessary to handle any given load, then set up a load balancer to assign a single virtual IP address that resolves to the IP address of each of the slaves as requests come in.

Full instructions for replicating with Solr are available on the Solr Wiki: Unix script replication, pure Java replication.

If you choose to use the script based replication, be aware that the JVM will launch some of the scripts. This is not something to worry about unless you run into the problem, but when the JVM launches a new process, it uses the operating system’s preferred method for creating one. On Unix systems, this method is generally the fork call. The fork call will usually try to allocate as much memory as the current process is using. This memory won’t be used, as an exec is coming next to launch the script, but the operating system may think you are going to use the requested RAM. So if your JVM is using 5 gigabytes of RAM, it’s going to request another 5 gigabytes to launch a simple, small script. Again, the memory is not needed or used, but you can get an Out of Memory exception if you don’t have the required RAM and your operating system does not specifically address this issue by default.

This is not a new problem in the world of fork, and one of the workarounds out there is memory overcommit combined with copy-on-write. In this mode, RAM allocation requests may be granted even if they cannot be filled; the out of memory problems will just happen later, if you do try to use too much RAM. Copy-on-write means that the forked process’ memory is shared with the parent process until one of them attempts to modify it, at which point it is copied.

If you are having trouble with this, check that your OS is set to overcommit memory. As an example case, Linux often comes set to allow memory overcommit in certain situations, but not for wildly large requests (it won’t likely allow an overcommit of 5 gigabytes). A simple heuristic is used to determine if the overcommit should be granted. You may need to change your OS settings to always overcommit if you find yourself with OOM problems when Solr tries to launch a script.
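On Linux, the current overcommit policy can be read from /proc/sys/vm/overcommit_memory, where 0 selects the heuristic mode, 1 means always overcommit, and 2 disables overcommit beyond a configured limit. The sketch below simply reads and labels that value; the function names are hypothetical, and other operating systems expose this setting differently or not at all.

```python
OVERCOMMIT_MODES = {
    0: "heuristic: obvious overcommits are refused",
    1: "always overcommit",
    2: "never overcommit beyond the configured limit",
}


def describe_overcommit(raw_value):
    """Translate the raw contents of overcommit_memory into a readable label."""
    return OVERCOMMIT_MODES.get(int(raw_value.strip()), "unknown")


def read_overcommit_mode(path="/proc/sys/vm/overcommit_memory"):
    """Read the Linux overcommit policy (Linux only; the path is absent elsewhere)."""
    with open(path) as handle:
        return describe_overcommit(handle.read())
```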

Large Index Sizes

Some indexes get so large that a single machine cannot adequately contain them. At tens of millions of documents and up you might run into this scenario, and the general solution is to break the index up so that pieces of it are located on multiple servers. A single search can then be issued to each server and the results can be pulled (all likely in parallel), and then combined into a single result set for the user. Lucene has a couple of classes to help you get started with distributed search, and Solr provides a simple, full blown solution that can scale to billions of documents.

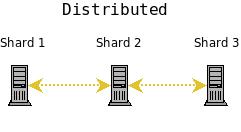

In a distributed configuration, one server ‘shard’ will get a query request and then search itself, as well as the other shards in the configuration, and return the combined results from each shard.
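The "combine" step can be illustrated with a small merge of ranked lists. This is a simplified sketch of the idea only, not how Lucene or Solr implement it internally; the function name and the (score, doc_id) tuple layout are assumptions made for the example.

```python
import heapq
from itertools import islice


def merge_shard_results(shard_results, rows):
    """Merge per-shard hit lists into the overall top `rows` hits.

    Each shard's list must already be sorted by descending score,
    and each hit is a (score, doc_id) tuple.
    """
    merged = heapq.merge(*shard_results, key=lambda hit: hit[0], reverse=True)
    return list(islice(merged, rows))


# Hypothetical results from two shards.
shard_a = [(0.9, "a1"), (0.4, "a2")]
shard_b = [(0.7, "b1"), (0.5, "b2")]
top_hits = merge_shard_results([shard_a, shard_b], rows=3)
```

Because every shard returns its hits already sorted, the merge only needs to look at the head of each list, so the cost grows with the number of rows requested rather than with the total index size.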

Distributed Lucene

Lucene’s distributed support is not extensive, but sufficient tools are available. Lucene provides a RemoteSearchable implementation that allows for distributed search with either a MultiSearcher or more likely a ParallelMultiSearcher. Rather than search a handful of local Searchables with a MultiSearcher, you can use the MultiSearcher to search across a number of RemoteSearchables, each pointing to a different server. Just as with a local MultiSearcher search, each sub Searchable will be searched, and the results combined. This method of scaling has been used for many distributed setups, but it is not an ideal solution and suffers from excessive chatter between servers, stunting truly large scale scalability. For many, it’s a simple and adequate solution though. Like I said, distributed search has not been a focus of the Lucene project, so you’re likely to run into plenty of situations where you will be writing some code. In fact, RemoteSearchable is really just a piece of the clever infrastructure that you’ll likely need to develop for a truly workable solution. As is often the case, it might be best to look at Solr for best practices in distributing Lucene. Keep in mind that there are other approaches out there and in use.

Distributed Solr

Solr provides an extremely simple, extremely scalable, distributed solution out of the box. As I mentioned in the introduction, Lucene is killer core IR technology, and Solr is a search server built on top (with some of its own killer technology – see faceting in particular). Solr includes deceptively simple distributed support built on top of Lucene.

Building a distributed Solr server farm is as simple as installing Solr on each machine. Solr refers to each server in a distributed setup as a ‘shard’ and your server farm will be made up of 1-n shards.

It’s up to you to get all of your documents indexed on each ‘shard’ of your server farm. There is no out of the box support for distributed indexing, but your method can be as simple as a round robin technique: index each document to the next server in the circle. A simple hashing system would also work, and the Solr Wiki suggests uniqueId.hashCode() % numServers as an adequate hashing function.
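Both routing schemes fit in a few lines. The sketch below is an illustration under assumptions: the function names and shard names are hypothetical, and zlib.crc32 stands in for Java’s hashCode() because it is stable across runs. If you implement the Wiki’s formula directly in Java, keep in mind that hashCode() can be negative, so the raw remainder can be negative as well.

```python
import itertools
import zlib


def round_robin_router(shards):
    """Return a function that assigns each new document to the next shard in the circle."""
    circle = itertools.cycle(shards)
    return lambda doc_id: next(circle)


def hash_router(shards):
    """Return a function that always maps the same unique id to the same shard."""
    def route(doc_id):
        # crc32 is never negative, so the modulo always yields a valid index.
        return shards[zlib.crc32(doc_id.encode("utf-8")) % len(shards)]
    return route


shards = ["shard0", "shard1", "shard2"]
next_shard = round_robin_router(shards)
by_hash = hash_router(shards)
```

Round robin gives the most even spread, while hashing has the advantage that an update or delete for a given id can be sent to the shard that already holds the document.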

Keep in mind that Solr does not calculate universal term/doc frequencies. At a large scale, it’s not likely to matter that tf/idf is calculated at the shard level. However, if your collection is heavily skewed in its distribution across servers, you might take issue with the relevance results. It’s probably best to randomly distribute documents to your shards.

Once you have your documents indexed to each shard, searching across multiple shards is dead simple:

http://localhost:8983/solr/select?shards=localhost:8983/solr,localhost:7…

You simply add a shards parameter that contains each shard’s URL, comma separated. This will cause the select RequestHandler to search each of the listed URLs independently and then combine the results as if you had issued one search across one large index. You should load balance requests across each of the servers. It’s generally best to avoid using the URL to specify your shards, though. If you have set up a lot of shards, or you just don’t want to deal with a bunch of URLs in a Solr GET request, it’s much easier to set the shards parameter for your SearchHandler in solrconfig.xml. That way you can set it once and effectively forget about it for a while.
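If you do build the request URL in client code, assembling the shards parameter programmatically avoids typos in long lists. The following is a minimal sketch: the function name and the example host names are hypothetical, and only the q and shards parameters are used.

```python
from urllib.parse import urlencode


def distributed_query_url(entry_shard, shards, query):
    """Build a select URL that fans the query out to every listed shard.

    `entry_shard` and each item of `shards` have the form host:port/path,
    without a scheme, as the shards parameter expects.
    """
    params = urlencode({"q": query, "shards": ",".join(shards)}, safe=",/:")
    return "http://" + entry_shard + "/select?" + params


# Hypothetical two-shard setup.
all_shards = ["shard1.example.com:8983/solr", "shard2.example.com:8983/solr"]
url = distributed_query_url(all_shards[0], all_shards, "lucene")
```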

Any RequestHandler that extends SearchHandler can use SearchComponents and perform a distributed search. However, only SearchComponents that are ‘distributed aware’ work with distributed searches. The current components that support distributed search are:

- The Query component that returns documents matching a query

- The Facet component, for facet.query and facet.field requests where facets are sorted by count (the default). The next version of Solr (1.4) will also support sorting by name.

- The Highlighting component

- The Debug component

For best results, you will want to load balance incoming requests across each of the shards. Each request that hits a shard will be distributed by that shard to itself and the other shards, and then the results are merged. You want to be sure to distribute that duty evenly across your shards. Be careful of the deadlock warning in the Solr Wiki if you do this though. You need to be sure that the number of threads serving HTTP requests in your container is greater than the number of requests you can get from the shard itself and all of the other shards in your configuration, or you may experience a deadlock.

Get the full details on setting up distributed search with Solr at the Solr Wiki.

Large Index Size and High Query Volume

When your index is too large for a single machine and you have a query volume that single shards cannot keep up with, it’s time to replicate each shard in your distributed search setup. The ideas here can be used with a pure Lucene system, but I’ll focus on Solr, as it is already targeted for this type of use.

The idea is to combine distributed search with replication. Take a look at the Distributed and Replicated figure. There will be a ‘master’ server for each shard and then 1-n ‘slaves’ that are replicated from the master. This allows the master to handle updates and optimizations without adversely affecting query handling performance. Query requests should be load balanced across each of the shard slaves. This gives you both increased query handling capacity and fail over backup if a server goes down.

With distribution and replication, none of the master shards know about each other. You index to each master, the index is replicated to each slave, and then searches are distributed across the slaves, using one slave from each master/slave shard.

For high availability you can use a load balancer to set up a virtual IP for each shard’s set of slaves. If you are new to load balancing, HAProxy is a good open source software load balancer. If a slave server goes down, a good load balancer will detect the failure using some technique (generally a heartbeat system), and forward all requests to the remaining live slaves in the failed slave’s group. A single virtual IP should then be set up so that requests can hit a single IP, and get load balanced to each of the virtual IPs for the search slaves.

With this configuration you will have a fully load balanced, search side fault tolerant system (Solr does not yet support fault tolerant indexing). Incoming searches will be handed off to one of the functioning slaves, then the slave will distribute the search request across a slave for each of the shards in your configuration. The slave will issue a request to each of the virtual IPs for each shard, and the load balancer will choose one of the available slaves. Finally the results will be combined into a single result set and returned. If any of the slaves go down, they will be taken out of rotation and the remaining slaves will be used. If a shard master goes down, searches can still be served from the slaves until you have corrected the problem and put the master back into production.
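The selection a load balancer performs for each shard can be pictured as follows. This is a toy illustration only, since a real load balancer such as HAProxy handles health checking and balancing for you; the function name, the is_alive callback, and the server names are all hypothetical.

```python
import random


def pick_live_slaves(shard_groups, is_alive, rng=random):
    """Pick one live slave per shard, skipping any that fail the health check.

    `shard_groups` is a list of slave lists, one list per shard.
    `is_alive` is a callable that returns True for a healthy slave.
    """
    chosen = []
    for slaves in shard_groups:
        live = [slave for slave in slaves if is_alive(slave)]
        if not live:
            raise RuntimeError("no live slave for shard group: %r" % (slaves,))
        chosen.append(rng.choice(live))
    return chosen


# Hypothetical farm: two shards with two slaves each, one slave down.
groups = [["a1", "a2"], ["b1", "b2"]]
down = {"a1"}
targets = pick_live_slaves(groups, lambda slave: slave not in down)
```

A search can be served as long as every shard still has at least one live slave; once a whole group is down, that portion of the index is unreachable.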

Conclusion

For most applications, if you start developing a scalable solution with Lucene, you begin to build a home brew search engine. This is usually not wise. Lucene attempts to be more of a toolkit, while Solr looks to be more of an end-to-end search solution. So why talk about scaling Lucene as well as Solr? You might need to scale Lucene if you inherit legacy code or have specific requirements that prevent you from using Solr. In general though, there is a fair amount of work involved to scale Lucene properly across multiple machines. Solr has done much of this, as well as a lot of other higher level work, and it is wise to take advantage of it. However, understanding how Lucene works and scales is an important part of understanding Solr’s inner workings and scalability as well. Remember, Lucene provides the tools to build a highly scalable search solution, while the Lucene sub project, Solr, uses Lucene to build such a solution.

Hopefully, you now see why I started with maximizing the performance of a single machine. It’s a bit obvious, but even if you start with requirements that push you beyond a single server right away, knowing how to maximize performance on a single machine is still very important. Both replication and distribution effectively turn into individual searches against each individual server (which are then combined in the distributed case). Most of the fruitful efforts in maximizing performance for distributed and replicated search are therefore the same as those for maximizing performance on a single machine.

I hope I have shown that Lucene and Solr both prove to be highly scalable search solutions. There is likely still plenty of exploring and testing that you will have to do for your unique requirements when it comes to a large scale installation, but hopefully you now have a little more direction for your journey. I think you will be amazed at Lucene/Solr’s performance even with just out-of-the-box settings – however, if properly tweaked and configured, Lucene/Solr can really fly on extremely large collections. With the proper configuration, scaling from millions to billions of documents with sub second response times, even under high load and reliability requirements, is very achievable.

Links

- SolrPerformanceFactors – A list of common things to consider when thinking about Solr performance.

- NIOFSDirectory – Lucene Directory implementation that allows for faster multi-core/processor performance on non-Windows operating systems.

- FSDirectory – Standard Lucene Directory implementation.

- FieldCache – Lucene cache of document field values / ordinals.

- IndexDeletionPolicy – Lucene class that allows custom control over deletion of old index views.

- FieldSelector – Lucene class that allows selective field loading.

- RemoteSearchable – Lucene class that allows for distributing indexes across servers.

- Lucene Benchmark Contrib – great framework for benchmarking Lucene changes and settings.

- Lucene: Improve Searching Speed – General tips for improving Lucene search performance.

- TrieRangeQuery – Efficient large scale numerical range search.

- Solr Wiki: Distributed Search – More about Solr’s excellent distributed search features.

- Solr Wiki: Collection Distribution – Solr index replication with Unix scripts.

- Solr Wiki: Solr Replication – Solr index replication using a Java implementation.

- Solr Wiki: Solr Caching – Learn more about Solr’s cache support.

- Solr Wiki: LukeRequestHandler – Explore your index data.

- SystemInfoHandler – Quickly get info about Solr and your server.

- Solr Faceting – An overview of Solr’s faceting features.

- Java Performance – Performance tips for Java from Sun.

- Hot Backups with Lucene – Information on how to safely back up your Lucene indexes.

- Solr Wiki: Writing Distributed Search Components – Getting started writing your distributed SearchComponents with Solr.

- Sun JVM bug: Single FileChannel slower than using multiple FileChannels (Windows).

- LUCENE-1483 – Change IndexSearcher multisegment searches to search each individual segment using a single HitCollector.

- SOLR-739 – Add support for OmitTf() to Solr.

- Skip Lists – Faster postings list intersection via skip pointers.

- Permuterm indexes – Efficient wildcard queries.

- BiWords – Efficient phrase searching.

- HAProxy – An open source software load balancer.
