Indexing PDF for OSINT and Pentesting

By Alejandro Nolla – @z0mbiehunt3r

Most of us, when conducting OSINT tasks or gathering information to prepare a pentest, draw on Google hacking techniques like site:company.acme filetype:pdf “for internal use only” or something similar to search for potentially sensitive information uploaded by mistake. At other times, a customer will ask us to find out whether they have leaked this kind of sensitive information through negligence, and we proceed to do some Google hacking fu.

But what happens if we don’t want to run these queries against Google and, furthermore, follow links from the search results that could leak referrers? Sure, we could download the documents and review them manually on our own machine, but that is boring and time-consuming. This is where Apache Solr comes into play: it processes the documents and builds an index of them, giving us almost real-time search capabilities.

What is Solr?

Solr is a schema-based (with dynamic field support as well) search solution built upon Apache Lucene. It provides full-text search, document processing, a REST API that returns results in formats such as XML or JSON, and more. Solr gives us many options for indexing documents: how to treat text, how to tokenize it, whether to convert it to lowercase automatically, how to build a distributed cluster, automatic duplicate document detection and so on.

Setting up Solr

There is plenty of material on how to install Solr, so I’m not going to cover it here, just the specific core options for this quick’n’dirty solution. The first thing to do is create a core config directory and a data directory; in this case I created /opt/solr/pdfosint/ and /opt/solr/pdfosintdata/ to store the config and the document data respectively.

To set the schema up, just create the file /opt/solr/pdfosint/conf/schema.xml with the following content:

schema.xml content for pdfosint core

<?xml version="1.0" encoding="UTF-8" ?>
<schema name="pastebincom" version="1.5">
  <fields>
    <field name="id" type="uuid" indexed="true" stored="true" default="NEW" multiValued="false" />
    <field name="text" type="text_general" indexed="true" stored="true"/>
    <field name="timestamp" type="date" indexed="true" stored="true" default="NOW" multiValued="false"/>
    <field name="_version_" type="long" indexed="true" stored="true"/>
    <dynamicField name="attr_*" type="text_general" indexed="true" stored="true" multiValued="true"/>
  </fields>
  <types>
    <fieldType name="string" class="solr.StrField" sortMissingLast="true" />
    <fieldType name="long" class="solr.TrieLongField" precisionStep="0" positionIncrementGap="0"/>
    <fieldType name="date" class="solr.TrieDateField" precisionStep="0" positionIncrementGap="0"/>
    <fieldType name="uuid" class="solr.UUIDField" indexed="true" />
    <fieldType name="text_general" class="solr.TextField" positionIncrementGap="100">
      <analyzer type="index">
        <tokenizer class="solr.WhitespaceTokenizerFactory"/>
        <filter class="solr.LowerCaseFilterFactory"/>
      </analyzer>
      <analyzer type="query">
        <tokenizer class="solr.WhitespaceTokenizerFactory"/>
        <filter class="solr.LowerCaseFilterFactory"/>
      </analyzer>
    </fieldType>
  </types>
</schema>

A quick review of the schema.xml config: I specified an id field to be unique (UUID), a text field to store the text itself, a timestamp field set to the date when the document is pushed into Solr, a version field to track the index version (used internally by Solr for replication and so on) and a dynamic field named attr_* to store any value provided by the parser but not specified in the schema. Finally, I specified how to treat text when indexing and querying: the tokenizer splits words on whitespace alone, without caring about punctuation, and the tokens are then converted to lowercase. If you want to know more about text processing, I would recommend Python Text Processing with NLTK 2.0 Cookbook as an introduction, Natural Language Processing with Python for more in-depth usage (both Python based) and the Natural Language Processing online course available on Coursera.
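To make the analyzer chain above more concrete, here is a minimal Python sketch that imitates what the whitespace tokenizer plus the lowercase filter do to a piece of text. It is only an approximation for illustration (the function name analyze is made up, and Solr's own whitespace handling may differ in corner cases), not Solr's actual implementation.

```python
def analyze(text):
    """Rough imitation of WhitespaceTokenizerFactory + LowerCaseFilterFactory."""
    # str.split() with no arguments splits on any run of whitespace,
    # so punctuation stays glued to the neighbouring word.
    return [token.lower() for token in text.split()]


tokens = analyze("FOR INTERNAL USE ONLY. Do not distribute (draft).")
print(tokens)
# ['for', 'internal', 'use', 'only.', 'do', 'not', 'distribute', '(draft).']
```

Note how "only." keeps its trailing dot: with this kind of tokenization, a search for only and a search for only. are different terms, which is worth remembering when a query unexpectedly returns nothing.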

The next step is to notify Solr about the new core by adding it to /opt/solr/solr.xml:

New core for PDF indexing

<cores> ... <core name="pdfosint" instanceDir="pdfosint"/> </cores>

Now all that is left is to give Solr binary document processing capabilities through a request handler, in this case only for the pdfosint core. To do so, create /opt/solr/pdfosint/conf/solrconfig.xml (we can always copy the example provided with Solr and modify it as needed) and specify the request handler:

Setting up solr request handler for binary documents

<requestHandler name="/update/extract" class="org.apache.solr.handler.extraction.ExtractingRequestHandler" >
  <lst name="defaults">
    <str name="fmap.content">text</str>
    <str name="lowernames">true</str>
    <str name="uprefix">attr_</str>
    <str name="captureAttr">true</str>
  </lst>
</requestHandler>

A quick review of this: the class may need to be changed depending on the Solr version and its class names; fmap.content tells Solr to index the extracted text into a field called text; lowernames converts the names of the extracted fields to lowercase (with underscores where needed), not the document contents; uprefix specifies how to handle fields that are parsed but not defined in schema.xml (in this case, by using the dynamic field with the attr_ prefix); and captureAttr indexes parsed attributes into separate fields. To learn more about ExtractingRequestHandler, see the official Solr documentation.
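As a rough illustration of how these defaults combine, the following Python sketch models the field-name mapping only: lowercase the name, apply the fmap rule, then prefix anything the schema does not know. It is a simplified model under my own assumptions (the function and constant names are invented, and non-ASCII names are not handled the way Solr would), not the handler's real code.

```python
import re

# Field names declared in the schema.xml shown earlier.
SCHEMA_FIELDS = {"id", "text", "timestamp", "_version_"}
FMAP = {"content": "text"}   # corresponds to fmap.content=text
UPREFIX = "attr_"            # corresponds to uprefix=attr_


def map_field_name(name, lowernames=True):
    """Return the index field an extracted field name would end up in."""
    if lowernames:
        # Lowercase and replace anything that is not a letter or digit.
        name = re.sub(r"[^a-z0-9]", "_", name.lower())
    # Explicit fmap.<source>=<target> rules come next.
    name = FMAP.get(name, name)
    # Unknown fields get the prefix so they match the attr_* dynamic field.
    if name not in SCHEMA_FIELDS and not name.startswith(UPREFIX):
        name = UPREFIX + name
    return name


for extracted in ("content", "Content-Type", "Author"):
    print(extracted, "->", map_field_name(extracted))
```

Under this model the document body ends up in text, while metadata such as Content-Type or Author lands in attr_content_type and attr_author.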

Now we have to install the libraries required for binary parsing and indexing. For this, I created /opt/solr/extract/ and copied solr-cell-4.2.0.jar into it from the dist directory of the Solr distribution archive, along with everything from contrib/extraction/lib/, also from the distribution archive.

Finally, add this line to /opt/solr/pdfosint/conf/solrconfig.xml to specify where to load the libraries from:

... <lib dir="/opt/solr/extract" regex=".*\.jar" /> ...

To learn more about this process, and for more recipes, I strongly recommend the Apache Solr 4 Cookbook.

Indexing and digging data

Now we have an extracting and indexing handler at http://localhost:8080/solr/pdfosint/update/extract/, so we only need to send the PDFs to Solr and analyze them. Once they are downloaded (or maybe fetched from a meterpreter session? }:) ), the easiest way is to send them to Solr with curl:

$ for i in /tmp/pdf/*.pdf; do curl "http://localhost:8080/solr/pdfosint/update/extract/?commit=true" -F "myfile=@$i"; done

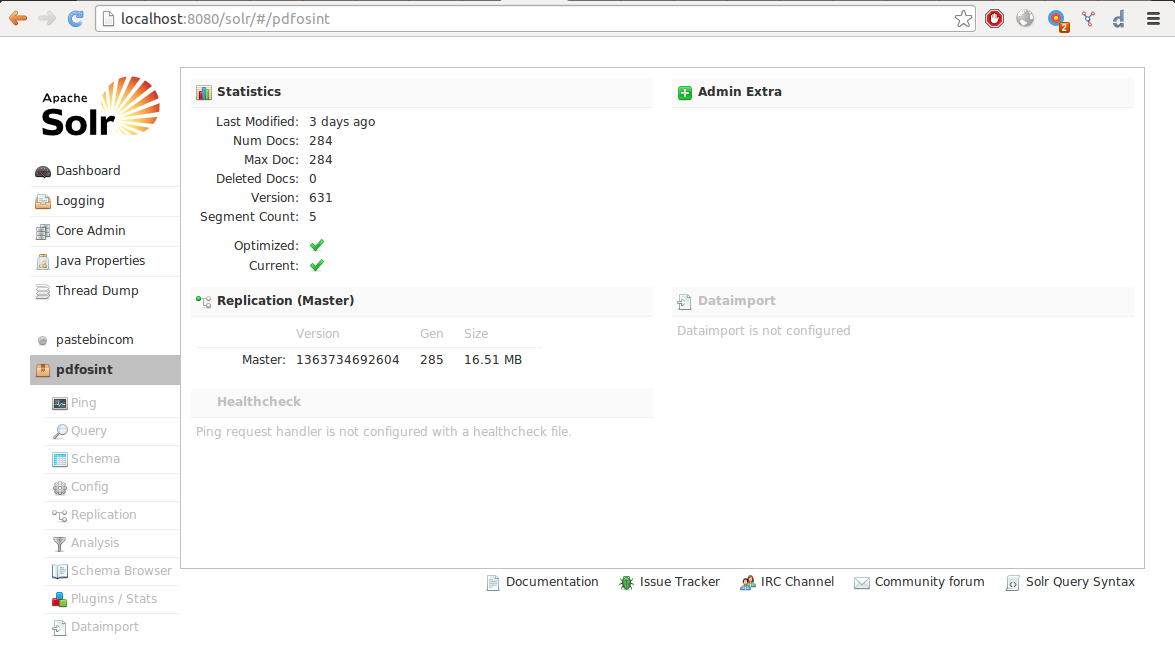
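If you would rather script the upload, the loop above can be sketched in Python with only the standard library. Unlike the curl -F multipart upload, this version sends each PDF as the raw request body with an application/pdf content type, which I am assuming the extract handler accepts in your setup. The function names are my own, and the URL simply mirrors the one used in this post.

```python
import glob
import os
import urllib.request

# Same handler as in the curl example; adjust host and port to your setup.
EXTRACT_URL = "http://localhost:8080/solr/pdfosint/update/extract"


def build_extract_request(pdf_path, commit=True):
    """Build (but do not send) a POST request carrying one PDF."""
    with open(pdf_path, "rb") as handle:
        body = handle.read()
    url = EXTRACT_URL + ("?commit=true" if commit else "")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/pdf"},
        method="POST",
    )


def index_directory(directory):
    """Send every *.pdf in a directory to Solr, returning HTTP status per file."""
    results = {}
    for pdf_path in sorted(glob.glob(os.path.join(directory, "*.pdf"))):
        request = build_extract_request(pdf_path)
        with urllib.request.urlopen(request) as response:
            results[pdf_path] = response.status
    return results
```

Calling index_directory("/tmp/pdf") would then push the same files as the shell loop. Committing after every document, as both versions do, is simple but can be slow with many files.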

After a while, depending on several factors like machine specs and document size, we should have an index like this:

So now we try a query to find documents containing the phrase “internal use only” and bingo!:
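The same kind of query can also be sent outside the admin interface. The sketch below builds a phrase query against the text field and reads the JSON response. It assumes the core exposes the usual /select handler (as the example solrconfig.xml shipped with Solr does); the function names and the choice of returned fields (id and timestamp, both from the schema above) are mine.

```python
import json
import urllib.parse
import urllib.request

# Assumed search endpoint for the pdfosint core.
SELECT_URL = "http://localhost:8080/solr/pdfosint/select"


def build_phrase_query_url(phrase, field="text", rows=10):
    """Return a /select URL searching `field` for an exact phrase."""
    # Escape backslashes and double quotes so the phrase stays one quoted term.
    escaped = phrase.replace("\\", "\\\\").replace('"', '\\"')
    params = {
        "q": '{}:"{}"'.format(field, escaped),
        "fl": "id,timestamp",   # only return these stored fields
        "rows": rows,
        "wt": "json",
    }
    return SELECT_URL + "?" + urllib.parse.urlencode(params)


def search_phrase(phrase):
    """Run the query and return (number of hits, list of matching docs)."""
    with urllib.request.urlopen(build_phrase_query_url(phrase)) as response:
        payload = json.load(response)
    return payload["response"]["numFound"], payload["response"]["docs"]
```

For example, search_phrase("internal use only") would return the hit count together with the id and timestamp of each matching document.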

Keep in mind that Solr splits and processes words both when indexing and when running queries. To see how a phrase will be treated and indexed by Solr when submitted, we can run an analysis with the built-in interface:

I hope you find this useful and give it a try, see you soon!

Original post by Alejandro Nolla – @z0mbiehunt3r can be found here.