What Are Question-Answering Systems?

Last year at the annual Activate conference, the line for the presentation “Enriching Solr with Deep Learning for Question Answering Systems” was out the door. A webinar on the same topic in the depths of December drew more than 600 attendees. So why the pent-up interest in question-answering systems, and what are they anyway? And do they really work?

Alan Turing opened his 1950 paper “Computing Machinery and Intelligence” with the words, “I propose to consider the question, ‘Can machines think?’”

Artificial intelligence has become an important part of our everyday lives, from self-driving cars and mobile banking to machine translation services. As a technology, AI emulates human behavior, typically by learning from data and appearing to understand complex content. However, understanding the components and processes that deliver AI capabilities is not as straightforward.

So let’s take a look at how we’ve trained machines to think like us and learn more about question-answering technology.

Early Question-Answering Systems

Question-answering (QA) systems were first developed in the early 1960s. Two of the earliest, BASEBALL and LUNAR, were successful because of their core database or knowledge system. Designed to answer questions about the US baseball league over a period of one year, BASEBALL easily fielded questions like “Where did each team play on July 7?” LUNAR covered questions about the geological analysis of rocks returned by the Apollo moon mission.

These early systems focused on “closed domains,” where every question has to be about the specific domain or use a limited vocabulary. The success of BASEBALL and LUNAR was largely due to the knowledge each system possessed. The next 20 years saw the development of open-domain systems that focused more on information retrieval.

Simplifying the Question-Answering Process

But when you are dealing with the public, closed domains aren’t really viable. Language is dynamic, and people have what can seem to be an infinite number of ways to ask a question. Information retrieval-based QA systems paved the way for a new search experience. Built around factoid questions (questions that are answered with simple facts), such a system treats each question as a search query and extracts the most relevant answer.

So how are these systems able to sort through all of the information available and give us millions of answers in under a second?

The information retrieval-based QA process is broken down into three stages: question processing, passage retrieval and ranking, and answer extraction.
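
The three stages above can be sketched in a few lines of Python. This is a minimal illustration, not how any production system works: the function names, the stopword list, and the keyword-overlap scoring are all assumptions made for the example, and real systems use far richer ranking and extraction models.

```python
import re

# Hypothetical stopword list, chosen only for this example.
STOPWORDS = {"the", "a", "an", "is", "was", "of", "in", "on", "did",
             "what", "where", "who", "which", "about"}

def tokens(text):
    """Lowercase a string and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def process_question(question):
    """Stage 1: reduce the question to a set of keywords."""
    return {w for w in tokens(question) if w not in STOPWORDS}

def rank_passages(keywords, passages):
    """Stage 2: keep passages that share keywords, best match first."""
    scored = [(len(keywords & set(tokens(p))), p) for p in passages]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [p for score, p in ranked if score > 0]

def extract_answer(keywords, passage):
    """Stage 3: return the sentence with the most keyword overlap."""
    sentences = re.split(r"(?<=[.!?])\s+", passage)
    return max(sentences, key=lambda s: len(keywords & set(tokens(s))))

def answer_question(question, passages):
    keywords = process_question(question)
    ranked = rank_passages(keywords, passages)
    return extract_answer(keywords, ranked[0]) if ranked else None

PASSAGES = [
    "BASEBALL answered questions about the US baseball league over a "
    "period of one year. It was one of the earliest QA systems.",
    "LUNAR covered questions about the geological analysis of rocks "
    "returned by the Apollo moon mission.",
    "Chatbots communicate through natural language processing.",
]

print(answer_question("Which system covered the analysis of moon rocks?", PASSAGES))
```

Here the question is reduced to keywords, the LUNAR passage wins on overlap, and its best-matching sentence is returned as the answer. A question with no matching keywords returns `None` rather than a guess.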

Understanding Information-Retrieval Based Queries

Let’s look at an example from Introduction to Information Retrieval, published by Cambridge University Press: a user wants to know which Shakespeare plays contain the words Brutus and Caesar but not Calpurnia (the query). For every word Shakespeare used, we record whether each play contains it. The result is a binary term-document incidence matrix, with a 1 where a play contains the term and a 0 where it does not.

To answer the query, we can read the matrix by rows or by columns. Read one way, it gives a vector for each term, which shows the documents that term appears in. For the query Brutus AND Caesar AND NOT Calpurnia, we take the vectors for Brutus and Caesar and the complement of the vector for Calpurnia, combine them with a bitwise AND (110100 AND 110111 AND 101111 = 100100), and use the result to pull the relevant answers:

Antony and Cleopatra, Act III, Scene ii

Agrippa [Aside to Domitius Enobarbus]:

Why, Enobarbus,

When Antony found Julius Caesar dead,

He cried almost to roaring, and he wept

When at Philippi he found Brutus slain.

Hamlet, Act III, Scene ii

Lord Polonius:

I did enact Julius Caesar: I was killed i’ the

Capitol; Brutus killed me.

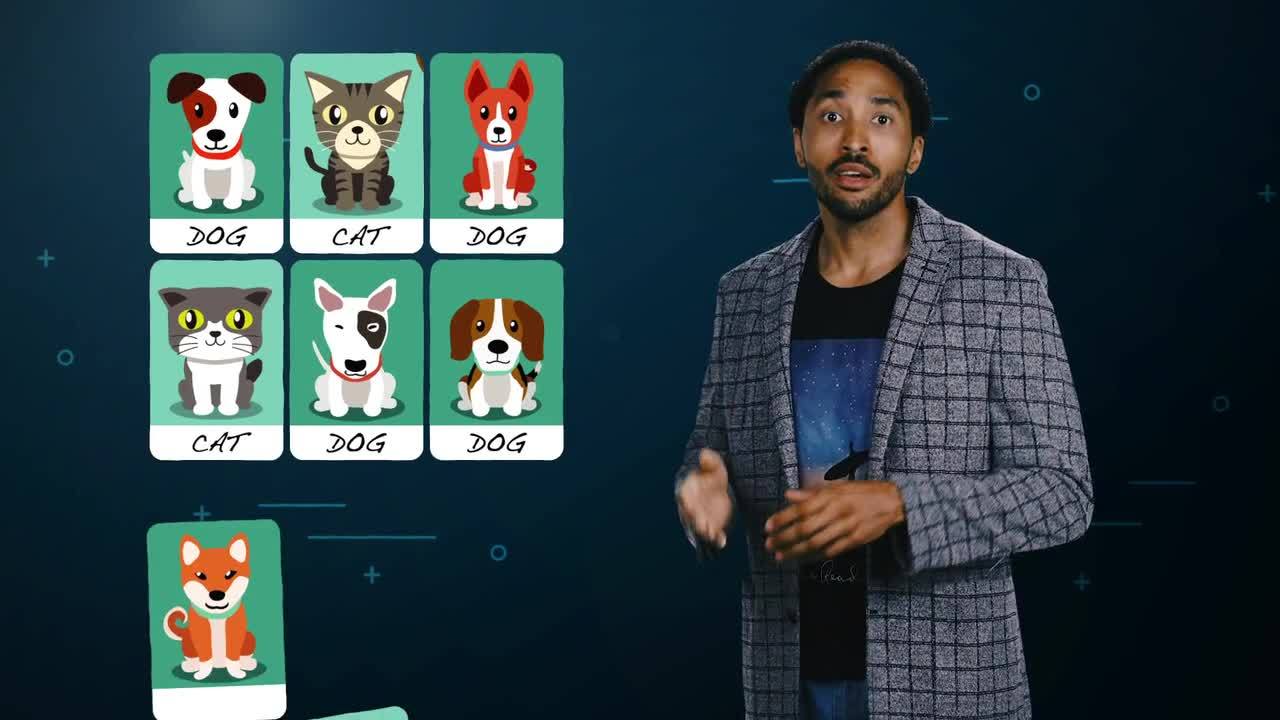
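
The Boolean query above can be reproduced with integer bit masks. This is a small sketch: the incidence vectors for Brutus and Caesar come from the text, while the Calpurnia vector (010000) and the left-to-right play order are assumptions taken from the textbook's matrix, and the helper name `matching_plays` is made up for the example.

```python
# Assumed play order: the leftmost bit of each vector is the first play.
PLAYS = ["Antony and Cleopatra", "Julius Caesar", "The Tempest",
         "Hamlet", "Othello", "Macbeth"]

# One incidence vector per term (1 = the play contains the term).
INCIDENCE = {
    "Brutus":    0b110100,
    "Caesar":    0b110111,
    "Calpurnia": 0b010000,  # assumed from the textbook matrix
}

# Python integers are unbounded, so NOT must be masked to six bits.
MASK = (1 << len(PLAYS)) - 1

def matching_plays(bits):
    """Translate a result vector back into play titles."""
    width = len(PLAYS)
    return [play for i, play in enumerate(PLAYS)
            if (bits >> (width - 1 - i)) & 1]

not_calpurnia = ~INCIDENCE["Calpurnia"] & MASK
result = INCIDENCE["Brutus"] & INCIDENCE["Caesar"] & not_calpurnia

print(format(not_calpurnia, "06b"))  # 101111
print(format(result, "06b"))         # 100100
print(matching_plays(result))        # ['Antony and Cleopatra', 'Hamlet']
```

The two plays that survive the AND are the ones quoted above. A matrix like this is mostly zeros for a real collection, which is why search engines typically store an inverted index instead.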

How Do We Teach Machines to Learn?

The machine learning process has steps similar to the human learning process. It is a mix of human and algorithmic activities and typically has the following steps:

- Curation and preparation of the training/test data. Today, this can be achieved by a mix of approaches from human-only curation to more automated solutions. Without good training data, machine learning is not effective or possible.

- Loading the training data into an untrained model so the algorithm may train the model.

- The training phase refines the manner in which the model learns. This process may include human activities such as changing the training data, or choosing a different model type or various hyperparameter settings. This process is increasingly being automated.
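
The steps above can be sketched with a toy example. Everything here is an assumption made for illustration: the data is invented, the "model" is a single numeric threshold, and "training" means trying a few candidate thresholds (the hyperparameter search) and keeping the one that fits the training rows best.

```python
# Invented (feature, label) rows; the last two are incomplete on purpose.
RAW_ROWS = [
    (0.10, 0), (0.20, 0), (0.35, 0), (0.40, 0),
    (0.60, 1), (0.70, 1), (0.80, 1), (0.90, 1),
    (None, 1), (0.50, None),
]

def curate(rows):
    """Step 1: drop rows with a missing feature or an invalid label."""
    return [(x, y) for x, y in rows if x is not None and y in (0, 1)]

def split(rows, every=4):
    """Hold out every fourth row for testing; train on the rest."""
    test = rows[::every]
    train = [row for i, row in enumerate(rows) if i % every != 0]
    return train, test

def accuracy(rows, threshold):
    """Fraction of rows where 'feature >= threshold' matches the label."""
    hits = sum((x >= threshold) == (y == 1) for x, y in rows)
    return hits / len(rows)

def train(rows, candidates):
    """Steps 2 and 3: keep the candidate that scores best on training data."""
    return max(candidates, key=lambda t: accuracy(rows, t))

clean = curate(RAW_ROWS)
train_rows, test_rows = split(clean)
threshold = train(train_rows, candidates=[0.25, 0.5, 0.75])
test_accuracy = accuracy(test_rows, threshold)

print(len(clean), threshold, test_accuracy)  # 8 0.5 1.0
```

The held-out test rows are never used to pick the threshold, so the test accuracy is an honest check of the trained model. A real project would shuffle before splitting and use a proper learning algorithm, but the shape of the workflow is the same.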

For the learning process to be successful, application leaders must realize that building a machine learning model is not like developing an application. The model’s performance will degrade over time as the data changes and will need to be maintained. “Outliers” in the training data need to be identified and removed to ensure the model continues to perform.

Chatbots and the Challenges of the Technology

Chatbots are able to communicate with us through natural language processing (NLP). The technology has only grown over the years with Cortana, Alexa and Google, all of which have learned how to take voice commands and convert them into text.

One professional in the industry, Chao Han, believes that “people usually think QA systems are not related to chatbots, but they can be the backbone of chatbot system. Current chatbot systems are hard coded rules, but if QA system is used to search on existing FAQs, a lot more questions can be answered.”

Although still in the early stages of maturity, the technology has seen tremendous improvements over the years. With more experience, sentiment analytics is maturing and gaining momentum within customer journey analytics, marketing, and building 360-degree customer views.

But we’ve all been there: trying to reach your doctor about that ongoing rash, only to find that the automated voice on the phone can’t seem to understand your name.

NLP Techniques Improve Performance

Applied NLP techniques, like extraction, sentiment analysis and translation services, help chatbots and virtual personal assistants (VPAs) provide higher-quality support and services to end users. Thanks to sentiment analytics, the speed of real-time analytics enables bots to raise alert levels when a customer is about to get irate, prompting a human operator to take over the chat or call.
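
The escalation idea can be sketched as follows. This is only a stand-in for real sentiment analytics: the word lists, the `window` and `threshold` parameters, and the function names are all hypothetical, and a production system would use a trained classifier rather than a hand-written lexicon.

```python
# Hypothetical sentiment lexicons, invented for this example.
NEGATIVE_WORDS = {"angry", "terrible", "useless", "ridiculous",
                  "worst", "frustrated", "unacceptable"}
POSITIVE_WORDS = {"thanks", "great", "helpful", "perfect", "good"}

def message_score(message):
    """Count positive words minus negative words in one message."""
    words = [w.strip(".,!?").lower() for w in message.split()]
    positives = sum(w in POSITIVE_WORDS for w in words)
    negatives = sum(w in NEGATIVE_WORDS for w in words)
    return positives - negatives

def should_escalate(messages, window=3, threshold=-2):
    """Hand off to a human when recent messages turn clearly negative."""
    recent = messages[-window:]
    return sum(message_score(m) for m in recent) <= threshold

angry_chat = [
    "Hi, I need help with my account.",
    "This is ridiculous, I already tried that.",
    "Your bot is useless and I am frustrated!",
]
calm_chat = ["Thanks, that was helpful."]

print(should_escalate(angry_chat))  # True
print(should_escalate(calm_chat))   # False
```

Scoring only the most recent messages lets the bot react to a conversation that is getting worse, rather than averaging over a chat that started politely.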

My colleague Carlos Valcarcel is a solutions engineer and is on the front line of fielding questions. He explains that when someone makes a request like “help me open a savings account,” it is a question about workflow: the chatbot will walk them through the various steps needed to complete the task. When someone asks “how do I open a savings account?” they simply want an answer. Carlos tells me, “the QA system answers the question and learns over time which answers are the better answers.”

In recent times, improving the technology has been centered around building more accurate classifiers to combat some of the main challenges that include analyzing tone, identifying irony and sarcasm, and understanding the context and polarity of the conversation.

Natural Language Search Engine

As with QA systems, most enterprises are not aware of the resources needed to keep a chatbot model deployed. It requires a natural language search engine that can parse entities, handle keyword matching and support deep learning.

It also requires ongoing attention to continuously evaluate the performance of the model and add domain-specific expertise to the chatbot. The enterprise needs to devote resources to model tuning and monitoring management on an ongoing basis. Failure to allocate these assets will result in a rapid decline in chatbot performance. And that won’t make anyone happy.

Yet with the right maintenance and implementation of the technologies, these systems can appeal to customers and improve their experience through the customer journey all the way to purchase.

Jessica Taylor is a Marketing Coordinator Intern at Lucidworks. She is working toward a degree in International Business and has an interest in technology’s impact throughout the customer journey.
