Lenovo’s annual revenue contribution through search increased 175% by switching to Fusion

“We don’t have to go in and validate that results are good; our customers are telling us the results are good.”

Marc Desormeau

Global Search Lead

Lenovo

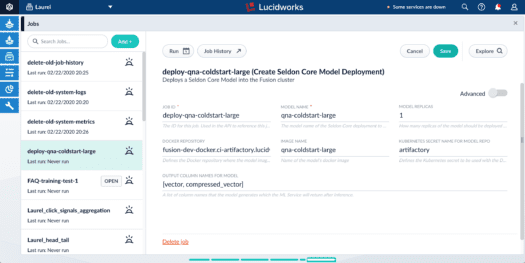

Deploy Models with Seldon Core

The Data Science Toolkit Integration (DSTI) uses Seldon Core behind the scenes to package your custom machine learning models and deploy them with familiar tools such as Jupyter and Docker Hub. Seldon Core packages each custom-built model into a Docker image that Lucidworks can consume and deploy as pods into your Kubernetes infrastructure.

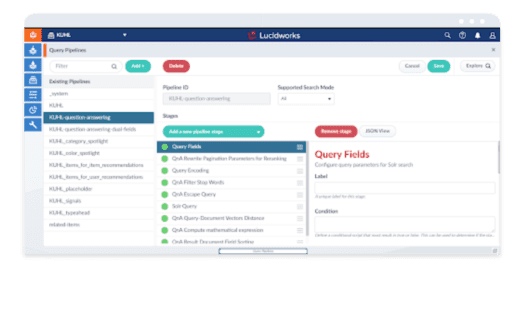

ML-Enabled Index and Query Pipelines

Models deployed through Seldon Core can be added to any index or query pipeline through a machine learning stage. Use models in an index pipeline to extract and enrich product data on ingest, or in a query pipeline to classify queries and predict user intent. Through the DSTI, your custom models are fully integrated without complex configuration.
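As an illustration of the kind of custom model a query pipeline might call, here is a minimal sketch of a query-intent classifier written in the style of the Seldon Core (v1) Python wrapper, where a class exposes a `predict` method that receives a batch of inputs and returns one prediction per input. This is an assumption-laden sketch, not the DSTI's documented interface: the class name `QueryIntentClassifier`, the intent labels, and the keyword rules are all hypothetical stand-ins for a trained model, and the exact `predict` signature Fusion expects may differ.

```python
from typing import Iterable, List, Optional


class QueryIntentClassifier:
    """Hypothetical query-intent model in the Seldon Core Python wrapper style.

    The labels and keyword rules below are illustrative only; a real model
    would be trained offline and loaded in __init__.
    """

    # Hypothetical keyword -> intent rules standing in for a trained model.
    # Rules are checked in insertion order; the first match wins.
    RULES = {
        "return": "customer_service",
        "refund": "customer_service",
        "order status": "customer_service",
        "laptop": "product_search",
        "sneakers": "product_search",
    }

    def __init__(self) -> None:
        # In a real deployment, load model weights here (e.g. a file baked into the image).
        self.default_label = "other"

    def predict(
        self,
        X: Iterable[str],
        features_names: Optional[List[str]] = None,
        **kwargs,
    ) -> List[str]:
        # Called with a batch of raw query strings; returns one intent label per query.
        labels = []
        for query in X:
            text = str(query).lower()
            label = self.default_label
            for keyword, intent in self.RULES.items():
                if keyword in text:
                    label = intent
                    break
            labels.append(label)
        return labels
```

For example, `QueryIntentClassifier().predict(["Cheap laptop deals", "I want a refund"])` returns `["product_search", "customer_service"]`. Keeping all model logic behind a single batch `predict` method is what lets a packaging layer like Seldon Core wrap the class in a network service without the model code knowing anything about HTTP or gRPC.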

Easily Scale Machine Learning Deployments

Once a machine learning model is deployed, much of the DevOps and model maintenance burden is handled for you. Seldon Core automatically distributes traffic across deployed model replicas, and the underlying Kubernetes infrastructure keeps your model pods healthy. The Seldon Core service communicates with the Fusion ML service to keep track of available models. Finally, the machine learning stages within pipelines interact with the ML service and Seldon Core over gRPC, which translates model inputs and outputs quickly and keeps your pipelines fast.

Ecommerce Customer Expectations Are Changing

Sin City Improves Search

Las Vegas travel search engine Vegas.com increased page views and engagement by 63%. Bounce rates for the mobile site dropped 8%. Conversion rates from search to a reservation or ticket increased by 33%. The team has since built the desktop search experience with Fusion as well.

Foot Locker Gets The Perfect Fit

The American sportswear and footwear retailer put Lucidworks to work and saw a 10% lift in add-to-cart, improved personalization, and better analytics to drive merchandising decisions for promotional events.

Gartner Report: Use Personalization to Enrich Customer Experience and Drive Revenue

Research from Gartner reveals the ROI of personalization.

Explore More Key Features

Recommendation Models

Leverage shopper signals to generate AI-powered recommendations.

Semantic Vector Search

Retrieve relevant results for challenging queries with Semantic Vector Search.

Predictive Merchandiser

Merchandise and curate digital experiences with Predictive Merchandiser.